
Chatbots: The Stethoscope for the 21st Century

Here’s how placing current debates within historical context can help us better understand the potential trajectory of artificial intelligence in psychiatry and medicine.

COMMENTARY

The rapid integration of technology into health care often triggers a complex interplay between tradition and innovation. Controversies surrounding the clinical use of chatbots such as ChatGPT, Bing AI, and Bard are but the latest example of this ongoing tension.

Placing current debates within historical context can help us better understand the potential trajectory of artificial intelligence (AI) in psychiatry and medicine.

The Stethoscope: A Pioneering Tale

The French physician René Laennec invented the stethoscope in 1816, but its acceptance was far from immediate. Several factors contributed to this resistance:

- Traditional technique preference: Many physicians were accustomed to the practice of immediate auscultation, where they would place their ear directly on the patient's chest to listen to the heart. This method was deeply ingrained in medical practice, and there was resistance to change.

- Perceived lack of necessity: Some physicians believed the stethoscope was an unnecessary gadget and that their unaided ears were sufficient for diagnosis. They questioned whether the stethoscope provided any substantial advantage over direct ear auscultation.

- Social and cultural barriers: Placing one's ear on the chest of a female patient was considered inappropriate by the social standards of the time. Although the stethoscope helped alleviate this problem, some physicians found the device itself impersonal and believed it might hinder their relationship with the patient.

- Lack of training and understanding: Using a stethoscope required a new set of skills, and many physicians were not initially trained in its use. The sounds heard through a stethoscope were different from what they were used to hearing directly and required interpretation.

- Skepticism of new technology: As with many technological advancements, there was general skepticism about the stethoscope's reliability and efficacy. Some saw it as a threat to traditional medical wisdom and expertise.

- Quality and availability: Early stethoscopes varied widely in quality, and not all were effective in amplifying sound. Additionally, they were not readily available to all physicians, contributing to resistance.

- Famous resisters: Well-known figures in medicine publicly resisted the stethoscope. For example, Pierre Potain, MD, a prominent French physician, was known to have preferred direct auscultation even after the stethoscope became popular.

Chatbots in Psychiatry: A Modern Debate

Pros

- Accessibility: One of the most compelling arguments in favor of chatbots is their ability to provide immediate, round-the-clock psychological support, especially to individuals who might not otherwise have access to mental health care services.1

- Cost-effectiveness: Chatbots can be a less expensive alternative to traditional psychiatric services, helping bridge the gap in resource-limited settings.2

- Anonymity and reduction of stigma: Online platforms allow patients to seek help anonymously, potentially mitigating the stigma associated with mental health treatment.3

- Data gathering: Chatbots can be programmed to collect valuable data, which could support a more personalized treatment approach.4

Cons

- Diagnostic limitations: Chatbots currently lack the capability to fully understand human emotions and nuances, leading to concerns about diagnostic accuracy.5

- Lack of human empathy: Although chatbots can simulate conversations, they cannot replicate the empathetic human connection that is often crucial in psychiatric treatment.6

- Data privacy and ethical concerns: The use of AI in health care raises significant ethical and privacy concerns, particularly related to data security and patient confidentiality.7

- Potential for misuse: There is a risk that some individuals might over-rely on chatbots, neglecting to seek professional medical advice for serious mental health issues.8

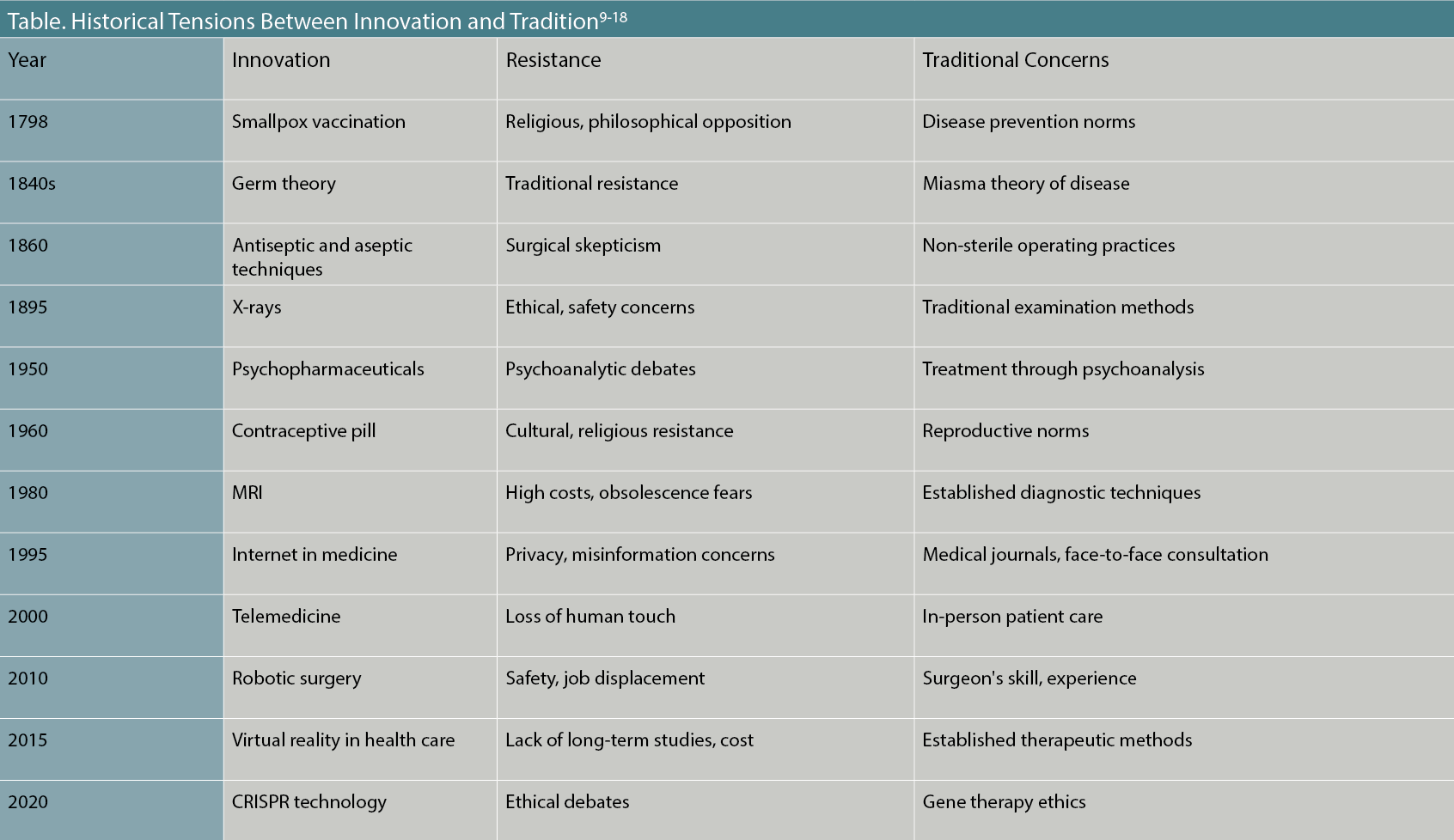

The debate around chatbots in psychiatry often focuses on these key points, echoing sentiments of past resistance to new technological implementations in health care. From concerns about a tool's effectiveness and ethical considerations to worries about the potential dehumanization of health care, these criticisms reflect broader historical trends and attitudes toward innovation in medical practice. Some other historical tensions between innovation and tradition are listed in the Table.9-18

Table. Historical Tensions Between Innovation and Tradition9-18

Concluding Thoughts and Future Implications

The enduring tension between tradition and innovation in psychiatry and medicine serves as a poignant backdrop for the ongoing debate around the integration of chatbots and other AI tools in health care settings.

Whether viewed through the lens of the slow adoption of the stethoscope or the ethical debates surrounding CRISPR technology, the essential dynamics remain the same: New technologies challenge existing norms, disrupt comfortable routines, and generate both enthusiasm and skepticism.

However, history also teaches us that these tensions are often temporary, subsiding as the utility and effectiveness of innovations become more evident. Technologies that initially seemed disruptive, such as telemedicine, have found their place in the health care ecosystem, especially when they complement rather than replace human skills and judgment.

In this context, it is reasonable to assume that AI and chatbots will continue to evolve, and as they do, their capabilities will likely become more nuanced and their applications more accepted.

It is also worth noting that ethical considerations and challenges, while significant, are not static. Just as the medical community addressed the dilemmas raised by earlier innovations, for example by standardizing aseptic surgical technique or establishing protocols for radiological imaging, similar efforts are now underway to address data privacy and other ethical concerns associated with AI and chatbots.

Looking forward, the use of AI and chatbots in psychiatry and medicine offers intriguing possibilities. From the democratization of health care access to the provision of real-time diagnostic support, these technologies have the potential to significantly impact how health care is delivered.19,20

At the same time, they pose questions that extend beyond efficacy and utility, challenging our perceptions of what it means to provide health care, and what roles humans and machines should play in this vital aspect of human life.

The key, as always, will be balance. We must navigate these uncharted waters with caution and consideration, but also with an openness to the transformative possibilities that lie ahead. In doing so, we honor both the traditions that have served us well and the innovations that promise a better future.

Dr Hyler is professor emeritus of psychiatry at Columbia University Medical Center.

References

1. Fogel AL, Kvedar JC. Artificial intelligence powers digital medicine. NPJ Digit Med. 2018;1:5.

2. Ziegelmayer S, Graf M, Makowski M, et al. Cost-effectiveness of artificial intelligence support in computed tomography-based lung cancer screening. Cancers (Basel). 2022;14(7):1729.

3. Lee Y-C, Yamashita N, Huang Y, Fu W. “I hear you, I feel you”: encouraging deep self-disclosure through a chatbot. In: CHI ’20: Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems. April 2020:1-12.

4. King MR. The future of AI in medicine: a perspective from a chatbot. Ann Biomed Eng. 2023;51(2):291-295.

5. Hirosawa T, Harada Y, Yokose M, et al. Diagnostic accuracy of differential-diagnosis lists generated by generative pretrained transformer 3 chatbot for clinical vignettes with common chief complaints: a pilot study. Int J Environ Res Public Health. 2023;20(4):3378.

6. van Wezel MMC, Croes EAJ, Antheunis ML. “I’m here for you”: can social chatbots truly support their users? a literature review. In: Lecture Notes in Computer Science (LNISA, volume 12604). February 3, 2021.

7. Kooli C. Chatbots in education and research: a critical examination of ethical implications and solutions. Sustainability. 2023;15(7):5614.

8. Demirkol O. NEDA did not forgive Tessa’s mistake and terminated the AI chatbot after the backlash. Dataconomy. June 2, 2023. Accessed October 5, 2023. https://dataconomy.com/2023/06/02/eating-disorder-helpline-tessa-chatbot/

9. Ruderman DB. Some Jewish responses to smallpox prevention in the late eighteenth and early nineteenth centuries: a new perspective on the modernization of European Jewry. Aleph: Historical Studies in Science and Judaism. 2002;2(1):111-144.

10. Pannell LB. Viperous breathings: the miasma theory in early modern England. West Texas A&M University. Master’s thesis. February 20, 2017. Accessed October 5, 2023. https://wtamu-ir.tdl.org/items/ad6f4a8c-e57b-49c3-a3fd-646139b9d85e

11. Nakayama DK. Antisepsis and asepsis and how they shaped modern surgery. Am Surg. 2018;84(6):766-771.

12. Mould RF. A Century of X-Rays and Radioactivity in Medicine With Emphasis on Photographic Records of the Early Years. Routledge; 1993.

13. Silvio JR, Condemarín R. Psychodynamic psychiatrists and psychopharmacology. J Am Acad Psychoanal Dyn Psychiatry. 2011;39(1):27-39.

14. Glass RH. Editorial: oral contraceptive agents. West J Med. 1975;122(1):66-67.

15. Edelman RR. The history of MR imaging as seen through the pages of Radiology. Radiology. 2014;273(2 Suppl):S181-S200.

16. Greene JA. The Doctor Who Wasn’t There: Technology, History, and the Limits of Telehealth. The University of Chicago Press; 2022.

17. George EI, Brand TC, LaPorta A, et al. Origins of robotic surgery: from skepticism to standard of care. JSLS. 2018;22(4):e2018.00039.

18. Shinwari ZK, Tanveer F, Khalil AT. Ethical issues regarding CRISPR mediated genome editing. Curr Issues Mol Biol. 2018;26:103-110.

19. Gopal G, Suter-Crazzolara C, Toldo L, Eberhardt W. Digital transformation in healthcare - architectures of present and future information technologies. Clin Chem Lab Med. 2019;57(3):328-335.

20. Woods M, Miklenčičová R. Digital epidemiological surveillance, smart telemedicine diagnosis systems, and machine learning-based real-time data sensing and processing in COVID-19 remote patient monitoring. Am J Med Res. 2021;8(2):65-77.
